How to Read an HDFS File in PySpark

Before reading the HDFS data, the Hive metastore server has to be started. Reading is just as easy as writing with `sparkSession.read`… Using `spark.read.json(path)` or `spark.read.format("json").load(path)` you can read a JSON file into a Spark DataFrame; both methods take an HDFS path as an argument. You can access HDFS files via the full path if no configuration is provided. In this page, I am going to demonstrate how to write and read Parquet files in HDFS…

To manage HDFS paths from Python, one option is hdfs3: `from hdfs3 import HDFileSystem`, `hdfs = HDFileSystem(host=host, port=port)`, then `hdfs.rm(some_path)`. The Apache Arrow Python bindings are the latest option, and they are often already available on a Spark cluster because `pandas_udf` requires them.

To check a written file in the Ambari console, select the “Files View” (matrix icon at the top right).
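The two JSON readers named above can be sketched as follows. This is a minimal illustration rather than code from the quoted sources: the helper names `get_spark`, `read_json` and `read_json_via_format`, and the application name, are hypothetical. The readers take an existing SparkSession, so any HDFS path can be passed in.

```python
def get_spark(app_name="read-hdfs-json"):
    """Create (or reuse) a SparkSession. The app name is an arbitrary placeholder."""
    # Imported lazily so the helpers below do not need Spark at import time.
    from pyspark.sql import SparkSession

    return SparkSession.builder.appName(app_name).getOrCreate()


def read_json(spark, path):
    """Read the JSON data at `path` (for example an hdfs:// URI) into a DataFrame."""
    return spark.read.json(path)


def read_json_via_format(spark, path):
    """Same read, spelled with the generic format()/load() API."""
    return spark.read.format("json").load(path)
```

On a cluster, something like `read_json(get_spark(), "hdfs://namenodehost/path/to/file.json").show()` would be a typical call; the path here is a placeholder, not one taken from the text.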

Steps to set up an environment: set up the environment variables for PySpark… For example, `import os`, then `os.environ["HADOOP_USER_NAME"] = "hdfs"` and `os.environ["PYTHON_VERSION"] = "3.5.2"`. In my previous post, I demonstrated how to write and read Parquet files in Spark/Scala.

With the Apache Arrow bindings, a path can be deleted with `from pyarrow import hdfs`, `fs = hdfs.connect(host, port)` and `fs.delete(some_path, recursive=True)`. This is PyArrow's older `pyarrow.hdfs` interface; recent releases expose `pyarrow.fs.HadoopFileSystem` instead.

Spark provides several ways to read .txt files: the `sparkContext.textFile()` and `sparkContext.wholeTextFiles()` methods read into an RDD, and the `spark.read.text()` and `spark.read.textFile()` methods read into a DataFrame or Dataset. Note that `spark.read.textFile()` exists only in the Scala and Java APIs, not in PySpark.
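The text-file readers listed above that are available from PySpark can be sketched like this. The function names are hypothetical wrappers added for illustration; each one simply forwards to the Spark call named in its body and expects a SparkSession supplied by the caller.

```python
def read_lines_as_rdd(spark, path):
    """RDD of strings, one element per line of the file(s) at `path`."""
    return spark.sparkContext.textFile(path)


def read_files_as_pairs(spark, path):
    """RDD of (file_path, file_content) pairs, one pair per file under `path`."""
    return spark.sparkContext.wholeTextFiles(path)


def read_lines_as_dataframe(spark, path):
    """DataFrame with a single string column named `value`, one row per line."""
    return spark.read.text(path)
```

`wholeTextFiles` loads each file's full content into a single record, so it suits many small files better than a few very large ones.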

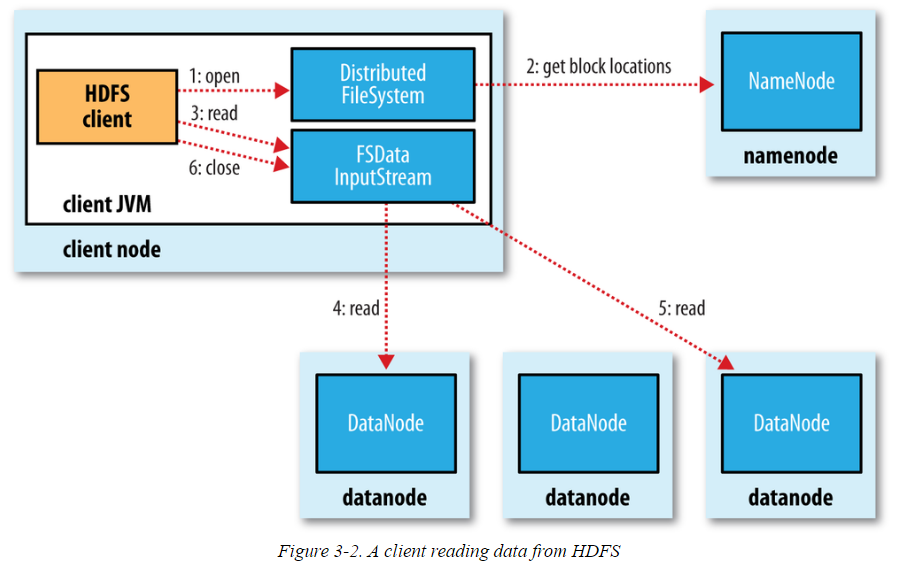

Reading a CSV file using PySpark: the path is /user/root/etl_project, as you've shown, and I'm sure it is also in your Sqoop command.

On the HDFS side, the input stream will similarly access data node 3 to read the relevant data present in that node.

How to read an ORC file using PySpark

Spark can (and should) read whole directories, if possible.
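Reading a whole directory, as recommended above, means passing the directory path instead of a single file path. The sketch below assumes a caller-supplied SparkSession; `read_directory` and its parameters are hypothetical names, and the format defaults to CSV only for illustration.

```python
def read_directory(spark, directory, fmt="csv", **options):
    """Load all data files under `directory` into one DataFrame.

    `fmt` is any format the DataFrameReader accepts (for example "csv",
    "json", "parquet" or "orc"); `options` are passed through unchanged.
    """
    return spark.read.format(fmt).options(**options).load(directory)
```

For a Sqoop export directory such as /user/root/etl_project, a call like `read_directory(spark, "hdfs://namenodehost/user/root/etl_project")` would pick up the part files together, without naming them individually.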

Using FileSystem API to read and write data to HDFS

You can access HDFS files via the full path if no configuration is provided (namenodehost is your localhost if HDFS is located in a local environment).
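A full path in this sense is an `hdfs://` URI that names the NameNode host followed by the absolute path. The helper below is a small sketch of how such a URI could be assembled; `hdfs_full_path` is a hypothetical name, and the port is left optional because the right value depends on how the cluster is configured.

```python
def hdfs_full_path(path, namenode_host="localhost", port=None):
    """Build an hdfs:// URI from a NameNode host and an absolute HDFS path."""
    if not path.startswith("/"):
        raise ValueError("expected an absolute HDFS path, e.g. /user/hdfs/test/example.csv")
    # Only append ':port' when the caller supplies one.
    authority = namenode_host if port is None else f"{namenode_host}:{port}"
    return f"hdfs://{authority}{path}"
```

The resulting string can be passed to any of the Spark readers that accept a path.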

How to read a JSON file in PySpark? (ProjectPro)

Read from HDFS: `df_load = sparkSession.read.csv('hdfs://cluster/user/hdfs…` The Parquet file destination is a local folder.

Reading HDFS files from JAVA program

To make it work from a Jupyter notebook app in Saagie, an additional code snippet has to be added. To read from HDFS, use `df_load = sparkSession.read.csv('hdfs://cluster/user/hdfs/test/example.csv')` followed by `df_load.show()`.
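Put together, the environment variables quoted earlier on this page and the CSV read could look like the sketch below. The function names are hypothetical, and pairing these particular environment variables with the Saagie notebook setup is an assumption based on the snippets above, not something the page confirms. The default path is the example path from the text.

```python
import os


def configure_hdfs_user(user="hdfs", python_version="3.5.2"):
    """Set the environment variables shown in the snippet above (values from the text)."""
    os.environ["HADOOP_USER_NAME"] = user
    os.environ["PYTHON_VERSION"] = python_version


def read_csv_from_hdfs(spark, path="hdfs://cluster/user/hdfs/test/example.csv"):
    """Read a CSV file from HDFS into a DataFrame using the given SparkSession."""
    return spark.read.csv(path)
```

A typical sequence would call `configure_hdfs_user()` before the SparkSession is created, then `df_load = read_csv_from_hdfs(spark)` and `df_load.show()`.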

Anatomy of File Read and Write in HDFS

How to read CSV files using PySpark » Programming Funda

In this Spark tutorial, you will learn how to read a text file from local and Hadoop HDFS into an RDD and a DataFrame using Scala examples.

DBA2BigData Anatomy of File Read in HDFS

How can I find the path of a file in HDFS?

Hadoop Distributed File System Apache Hadoop HDFS Architecture Edureka

How can I read part_m_0000?

What Is HDFS? (立地货)

Navigate to /user/hdfs. Good news: the example.csv file is present.

The input stream will access data node 1 to read relevant information from the block located there.

Let's check that the file has been written correctly by navigating to /user/hdfs in the Ambari Files View.