Read Delta Table Into Dataframe Pyspark

June 05, 2023. This guide helps you quickly explore the main features of Delta Lake. Read a Delta Lake table on some file system and return a DataFrame. `index_col`: str or list of str, optional, ... Write the DataFrame out as a Delta Lake table. Write the DataFrame into a Spark table. To load a Delta table into a PySpark DataFrame, you can use the ... You can easily load tables to ... `from pyspark.sql.types import *`, `dt1 = (` ...
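As a rough sketch of the path-based round trip mentioned above, the helpers below write a DataFrame out in Delta format and load it back into a PySpark DataFrame. They assume an existing `SparkSession` that has the Delta Lake package configured; the function names and the example path are illustrative and do not come from the original guide.

```python
from typing import TYPE_CHECKING

if TYPE_CHECKING:  # type hints only, so this module loads even without Spark installed
    from pyspark.sql import DataFrame, SparkSession


def write_delta_path(df: "DataFrame", path: str, mode: str = "overwrite") -> None:
    """Write `df` to `path` in Delta format (hypothetical helper)."""
    df.write.format("delta").mode(mode).save(path)


def read_delta_path(spark: "SparkSession", path: str) -> "DataFrame":
    """Load the Delta table stored at `path` into a DataFrame (hypothetical helper)."""
    return spark.read.format("delta").load(path)


# Usage, assuming `spark` is a Delta-enabled SparkSession and the path is writable:
#   write_delta_path(spark.range(0, 5), "/tmp/delta/numbers")
#   df = read_delta_path(spark, "/tmp/delta/numbers")
#   df.show()
```

Passing the session and DataFrame in as arguments keeps the helpers independent of how the session was built, which differs between a local install, Databricks, and Synapse.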

Azure Databricks uses Delta Lake for all tables by default. In the yesteryears of data management, data warehouses reigned supreme with their ... Here's how to create a Delta Lake table with the PySpark API: ... If the Delta Lake table is already stored in the catalog (aka ...). Read a Spark table and return a DataFrame.
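For a table that is registered in the catalog rather than addressed by path, a minimal sketch could use `saveAsTable` and `spark.read.table`. The helper names and the table name in the usage comment are assumptions made for illustration, and the session is again assumed to be Delta-enabled.

```python
from typing import TYPE_CHECKING

if TYPE_CHECKING:  # type hints only, so this module loads even without Spark installed
    from pyspark.sql import DataFrame, SparkSession


def save_as_delta_table(df: "DataFrame", table_name: str, mode: str = "overwrite") -> None:
    """Register `df` in the catalog as a Delta table (hypothetical helper)."""
    df.write.format("delta").mode(mode).saveAsTable(table_name)


def read_catalog_table(spark: "SparkSession", table_name: str) -> "DataFrame":
    """Read a catalog table by name and return it as a DataFrame (hypothetical helper)."""
    return spark.read.table(table_name)


# Usage, assuming `spark` is a Delta-enabled SparkSession:
#   save_as_delta_table(spark.range(0, 5), "numbers")
#   df = read_catalog_table(spark, "numbers")
```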

PySpark: load a Delta table into a DataFrame. This tutorial introduces common Delta Lake operations on Databricks, including the following: ... Create a DataFrame with some range of numbers. `# Read file(s) in spark data ...` If the schema for a Delta table ... In Scala, a Delta table can be read as a stream: `import io.delta.implicits._`, then `spark.readStream.format("delta").table("events")`.
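A PySpark counterpart to the streaming read of the `events` table might look like the sketch below. It assumes Spark 3.1 or later (where `DataStreamReader.table` is available) and a Delta-enabled session; the helper name is hypothetical.

```python
from typing import TYPE_CHECKING

if TYPE_CHECKING:  # type hints only, so this module loads even without Spark installed
    from pyspark.sql import DataFrame, SparkSession


def stream_delta_table(spark: "SparkSession", table_name: str) -> "DataFrame":
    """Return a streaming DataFrame over a Delta table in the catalog (hypothetical helper)."""
    return spark.readStream.format("delta").table(table_name)


# Usage, assuming `spark` is a Delta-enabled SparkSession and an `events` table exists:
#   events = stream_delta_table(spark, "events")
#   print(events.isStreaming)
```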

It provides code snippets that show how to ... In Python, Delta Live Tables determines whether to update a dataset as a materialized view or streaming table.

I used a little PySpark code to create a Delta table in a Synapse notebook. `DataFrame.spark.to_table()` is an alias of `DataFrame.to_table()`.
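To illustrate the alias, here is a small sketch that saves a pandas-on-Spark DataFrame as a Delta table through either spelling. The helper name and its `use_spark_accessor` flag are made up for this example; `psdf` is assumed to be a `pyspark.pandas.DataFrame`.

```python
def save_psdf_as_delta_table(psdf, table_name: str, use_spark_accessor: bool = False) -> None:
    """Save a pandas-on-Spark DataFrame as a Delta table (hypothetical helper).

    Both call paths are expected to behave the same, since
    `DataFrame.spark.to_table()` is an alias of `DataFrame.to_table()`.
    """
    writer = psdf.spark.to_table if use_spark_accessor else psdf.to_table
    writer(table_name, format="delta", mode="overwrite")


# Usage, assuming pyspark.pandas is available and Delta Lake is configured:
#   import pyspark.pandas as ps
#   psdf = ps.DataFrame({"id": [1, 2, 3]})
#   save_psdf_as_delta_table(psdf, "numbers")
```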

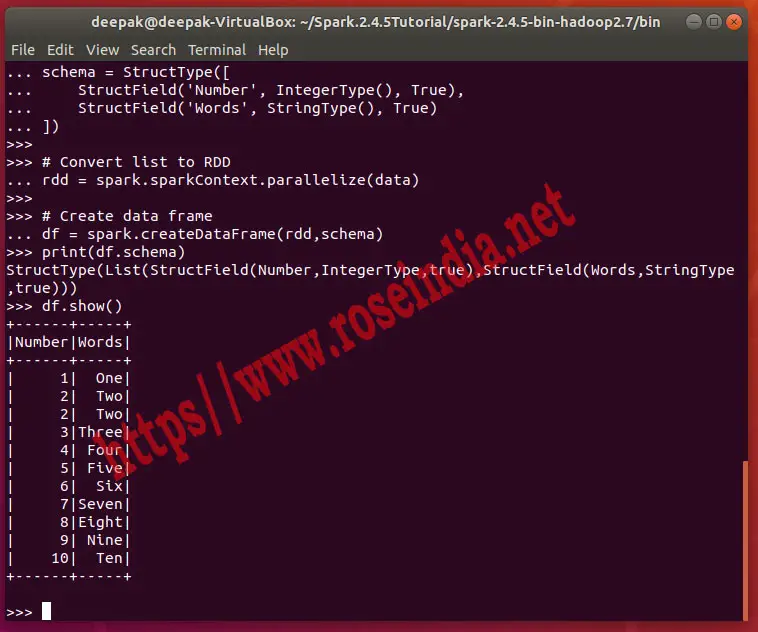

Read a table into a DataFrame.

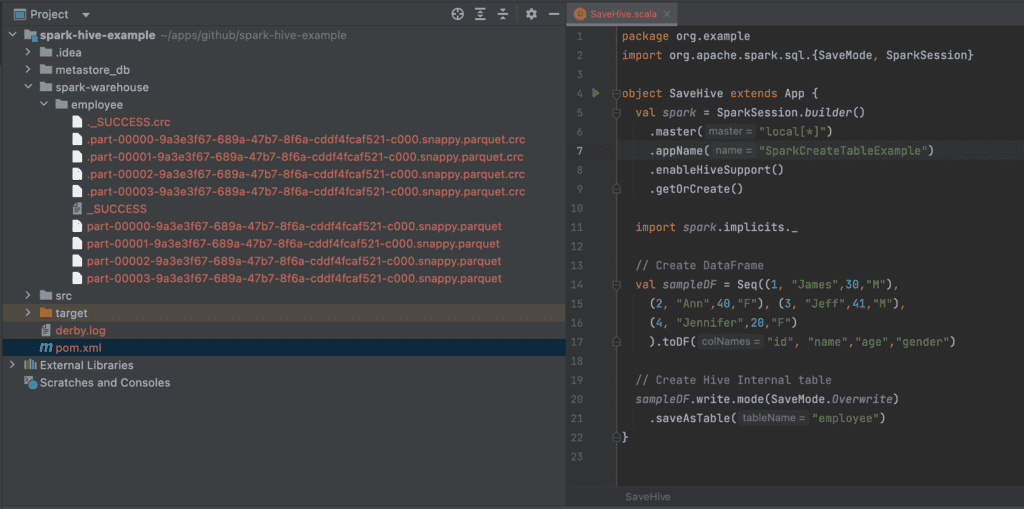
