Read Parquet PySpark

Parquet is a columnar storage format published by Apache and supported by many other data processing systems. In Spark, DataFrameReader is the foundation for reading data and is accessed through the spark.read attribute. Just as the write side has a parquet() function, DataFrameReader provides parquet() (called as spark.read.parquet()) to read Parquet files from a given path, including locations on Amazon S3.

The pyspark.sql package is imported into the environment to read and write data as a DataFrame in Parquet format. Older examples import SQLContext from pyspark.sql; since Spark 2.0 the usual entry point is a SparkSession. Common tasks include reading all Parquet files under a directory, and writing a DataFrame into a Parquet file and reading it back.

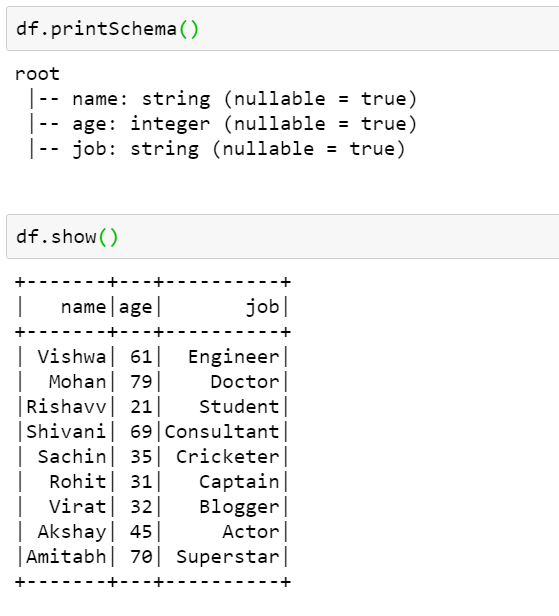

How to read a Parquet file using PySpark

As with writing, DataFrameReader provides a parquet() function (spark.read.parquet) that can read Parquet files from Amazon S3. DataFrameReader is the foundation for reading data in Spark and is reached through the spark.read attribute, so reading a file is a single call to spark.read.parquet() with the file's path.
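
A minimal sketch of reading from S3 with spark.read.parquet follows. The helper names, the bucket, and the prefix are hypothetical, and the sketch assumes the cluster already has the Hadoop S3A connector (hadoop-aws) and AWS credentials configured, which is why the path uses the s3a:// scheme.

```python
def s3a_uri(bucket: str, key: str) -> str:
    """Build an s3a:// URI from a bucket name and an object key or prefix."""
    return f"s3a://{bucket}/{key.lstrip('/')}"


def read_parquet_from_s3(spark, bucket: str, key: str):
    """Read Parquet data at s3a://<bucket>/<key> into a DataFrame.

    `spark` is an existing SparkSession. Assumes the S3A connector and
    credentials are already set up for the session.
    """
    return spark.read.parquet(s3a_uri(bucket, key))


# Hypothetical usage; the bucket and prefix are placeholders:
#   from pyspark.sql import SparkSession
#   spark = SparkSession.builder.appName("read-parquet-s3").getOrCreate()
#   df = read_parquet_from_s3(spark, "example-bucket", "warehouse/events/")
#   df.printSchema()
```

Keeping the URI construction in its own function makes it easy to check the path that Spark will receive without needing a running cluster.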

How To Read A Parquet File Using Pyspark Vrogue

PySpark provides a simple way to read Parquet files: the read.parquet() method. Parquet itself is a columnar storage format published by Apache and supported by many other data processing systems, and it can be both written and read from Python with Spark.

How to read Parquet files in PySpark Azure Databricks?

Parquet is a columnar storage format published by Apache, and it can be both written and read from Python with Spark. Older examples begin by importing SQLContext from pyspark.sql. Round-trip examples often write to a scratch directory created with tempfile.TemporaryDirectory() and then read the result back.

PySpark Read and Write Parquet File Spark by {Examples}

PySpark comes with the read.parquet function for reading Parquet files from a given path. It is the parquet() function of DataFrameReader (spark.read.parquet) and, like its write counterpart, it also works with files on Amazon S3.

How to read and write Parquet files in PySpark

Parquet is a columnar storage format published by Apache. A common question when writing a Spark DataFrame to Parquet is how to write it into a specific number of Parquet files in total across all partition columns.
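
One common way to cap the number of output files is to repartition the DataFrame before writing, since each non-empty in-memory partition is written as one part file. The sketch below is an illustration under that assumption, not necessarily the answer the original question arrived at; the function name and default mode are hypothetical. If the write also uses partitionBy, each task can emit one file per partition value it holds, so the total may exceed the requested count.

```python
def write_parquet_files(df, path: str, num_files: int, mode: str = "overwrite") -> None:
    """Write `df` to `path` as at most `num_files` Parquet part files.

    `df` is a PySpark DataFrame. repartition() shuffles the data into
    `num_files` in-memory partitions; empty partitions produce no file,
    so the result can contain fewer files than requested.
    """
    if num_files < 1:
        raise ValueError("num_files must be at least 1")
    df.repartition(num_files).write.mode(mode).parquet(path)


# Hypothetical usage, given an existing DataFrame `df`:
#   write_parquet_files(df, "/tmp/events_parquet", num_files=4)
```

When the goal is to shrink the file count without a full shuffle, coalesce() can be used in place of repartition(), at the cost of less evenly sized files.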

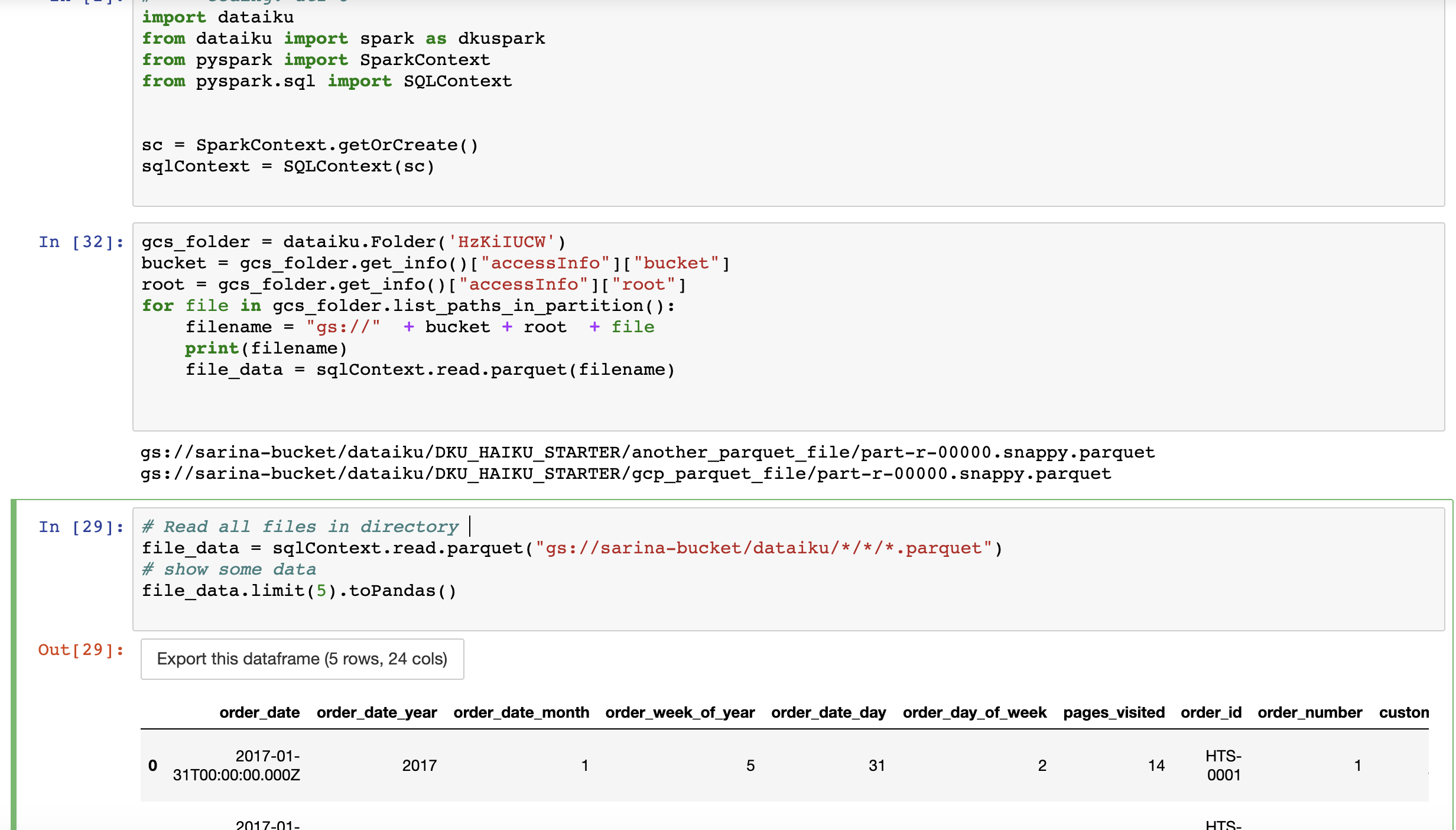

Solved How to read parquet file from GCS using pyspark? Dataiku

The pyspark.sql package is imported into the environment to read and write data as a DataFrame in Parquet format. Reading uses the read.parquet() method; on the write side, a frequent requirement is to write a PySpark DataFrame into a specific number of Parquet files in total across all partition columns.

How To Read Various File Formats In PySpark (JSON, Parquet, ORC, Avro)

To read a Parquet file with PySpark, use the read.parquet function, which reads Parquet files from the given path.

[Solved] PySpark how to read in partitioning columns 9to5Answer

Parquet is a columnar storage format published by Apache and supported by many other data processing systems. To read a Parquet file with PySpark, use the parquet() function that DataFrameReader provides (spark.read.parquet); as with writing, it can also read Parquet files from Amazon S3.
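
On the partitioning-columns question in the heading: when a single partition directory such as .../year=2023 is read directly, partition discovery starts at that directory and the year column does not appear in the result. Setting the basePath option tells Spark where discovery should start, so the column is kept. The sketch below assumes a dataset laid out in key=value directories; the function name and the paths are hypothetical.

```python
def read_partition(spark, base_path: str, partition_dir: str):
    """Read one partition directory while keeping its partition columns.

    `spark` is an existing SparkSession. `base_path` is the dataset root
    and `partition_dir` is a relative directory such as "year=2023".
    """
    full_path = f"{base_path.rstrip('/')}/{partition_dir.strip('/')}"
    # basePath makes partition discovery start at the dataset root, so
    # columns encoded in the directory names are included in the schema.
    return spark.read.option("basePath", base_path).parquet(full_path)


# Hypothetical usage; the paths are placeholders:
#   df = read_partition(spark, "/data/events", "year=2023")
#   df.select("year").distinct().show()
```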

PySpark read parquet Learn the use of READ PARQUET in PySpark

Example of Spark read and write for Parquet files (Apache Spark, January 24, 2023). To read a Parquet file with PySpark, use the read.parquet function, which reads Parquet files from the given path.

DataFrameReader Is The Foundation For Reading Data In Spark And Can Be Accessed Via The Attribute spark.read

The pyspark.sql package is imported into the environment to read and write data as a DataFrame in Parquet format. Older code obtains the reader from a SQLContext imported from pyspark.sql; either way, the reader is exposed as the read attribute.

Write A DataFrame Into A Parquet File And Read It Back

PySpark provides a simple way to read Parquet files using the read.parquet() method. When writing a Spark DataFrame to Parquet, a columnar storage format published by Apache, a common requirement is to write it into a specific number of Parquet files in total across all partition columns.

Write And Read Parquet Files In Python / Spark

read.parquet is the method PySpark provides for reading data from Parquet files. A convenient way to try both directions is to write a DataFrame into a temporary directory created with tempfile.TemporaryDirectory() and read it back.
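
The round trip can be sketched as follows, built around the tempfile.TemporaryDirectory() fragment quoted in the source. The function name and the sample rows are hypothetical. The write uses mode("overwrite") because the temporary directory already exists when Spark writes into it, and the rows are collected inside the with block because Spark reads lazily and the directory is deleted on exit.

```python
import tempfile


def parquet_round_trip(spark, rows, columns):
    """Write `rows` to a temporary Parquet directory and read them back.

    `spark` is an existing SparkSession, `rows` is a list of tuples and
    `columns` is the matching list of column names.
    """
    with tempfile.TemporaryDirectory() as d:
        # Write the DataFrame as Parquet into the temporary directory.
        spark.createDataFrame(rows, schema=columns).write.mode("overwrite").parquet(d)
        # Read it back and materialize the rows before the directory is removed.
        return spark.read.parquet(d).collect()


# Hypothetical usage (requires a local Spark installation):
#   from pyspark.sql import SparkSession
#   spark = SparkSession.builder.master("local[1]").appName("parquet-demo").getOrCreate()
#   print(parquet_round_trip(spark, [("Alice", 30), ("Bob", 25)], ["name", "age"]))
```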

Similar To Write, DataFrameReader Provides A parquet() Function (spark.read.parquet) To Read Parquet Files From Amazon S3

PySpark comes with the read.parquet function for reading Parquet files from a given path. The path may also be a directory, in which case the Parquet files under it are read together. Parquet is a columnar format that is supported by many other data processing systems.
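
For the directory case, a small sketch: spark.read.parquet accepts one or more paths, and each may be a file or a directory. The function name and paths are hypothetical. The optional recursiveFileLookup setting assumes Spark 3.0 or later; it also picks up files in nested subdirectories but disables partition inference.

```python
def read_parquet_paths(spark, *paths: str, recursive: bool = False):
    """Read Parquet data from one or more files or directories.

    `spark` is an existing SparkSession. With `recursive=True`, files in
    nested subdirectories are included as well (Spark 3.0+).
    """
    if not paths:
        raise ValueError("at least one path is required")
    reader = spark.read
    if recursive:
        # Walk subdirectories too; note this turns off partition inference.
        reader = reader.option("recursiveFileLookup", "true")
    return reader.parquet(*paths)


# Hypothetical usage; the paths are placeholders:
#   df = read_parquet_paths(spark, "/data/events")                # whole directory
#   df = read_parquet_paths(spark, "/data/2022", "/data/2023")    # several directories
#   df = read_parquet_paths(spark, "/data/raw", recursive=True)   # nested layout
```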